Fullsend Technology

The Problem

Small business owners know they need content marketing, but they don't have the time, expertise, or budget to do it well. Figuring out what to write about, actually creating the content, optimizing it for search, and distributing it across platforms is a full-time job most of them can't afford to hire for.

What We Built

Fullsend is an AI-native content automation platform that handles the entire content marketing workflow, from research to publishing.

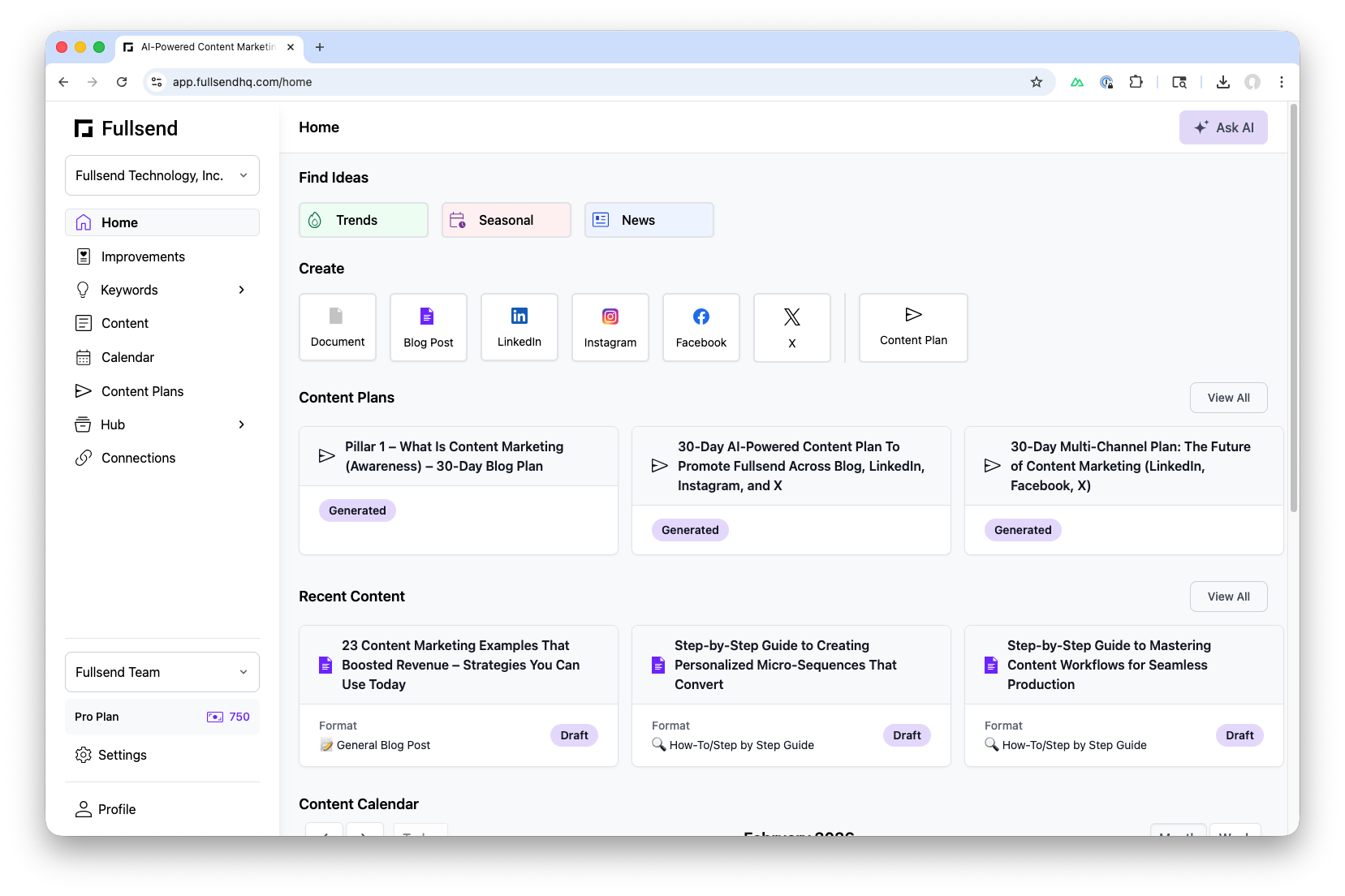

Here's how it works:

- Brand Discovery. A business owner enters their website URL. Our AI agent scrapes the site and builds a brand profile that the owner can review and edit.

- Audits & Research. The agent runs a technical SEO audit and a content audit, then uses MCP integrations to find keyword opportunities related to the brand.

- Content Strategy. Based on audit results, the agent surfaces content suggestions: topics the business should be writing about to get discovered.

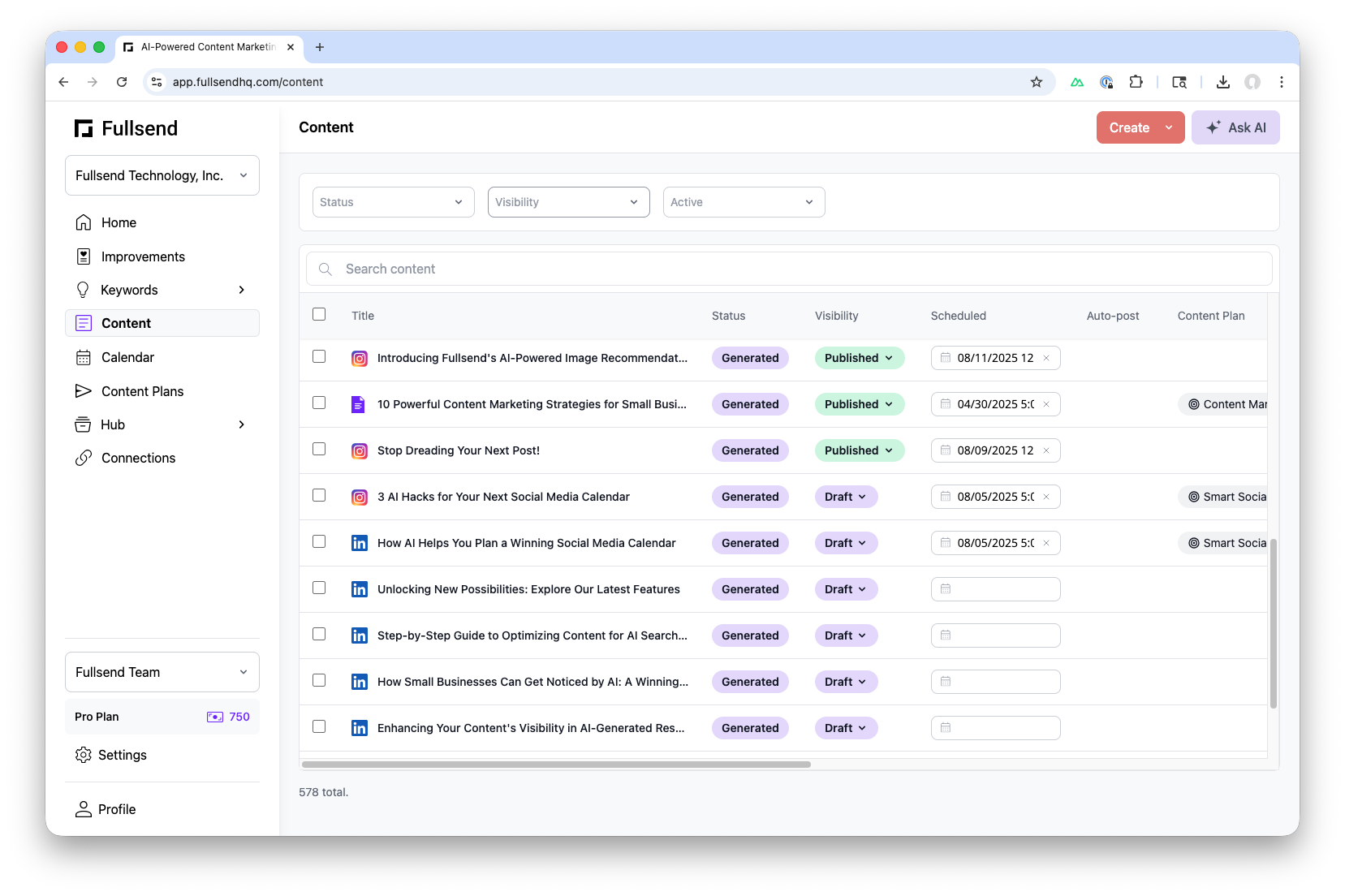

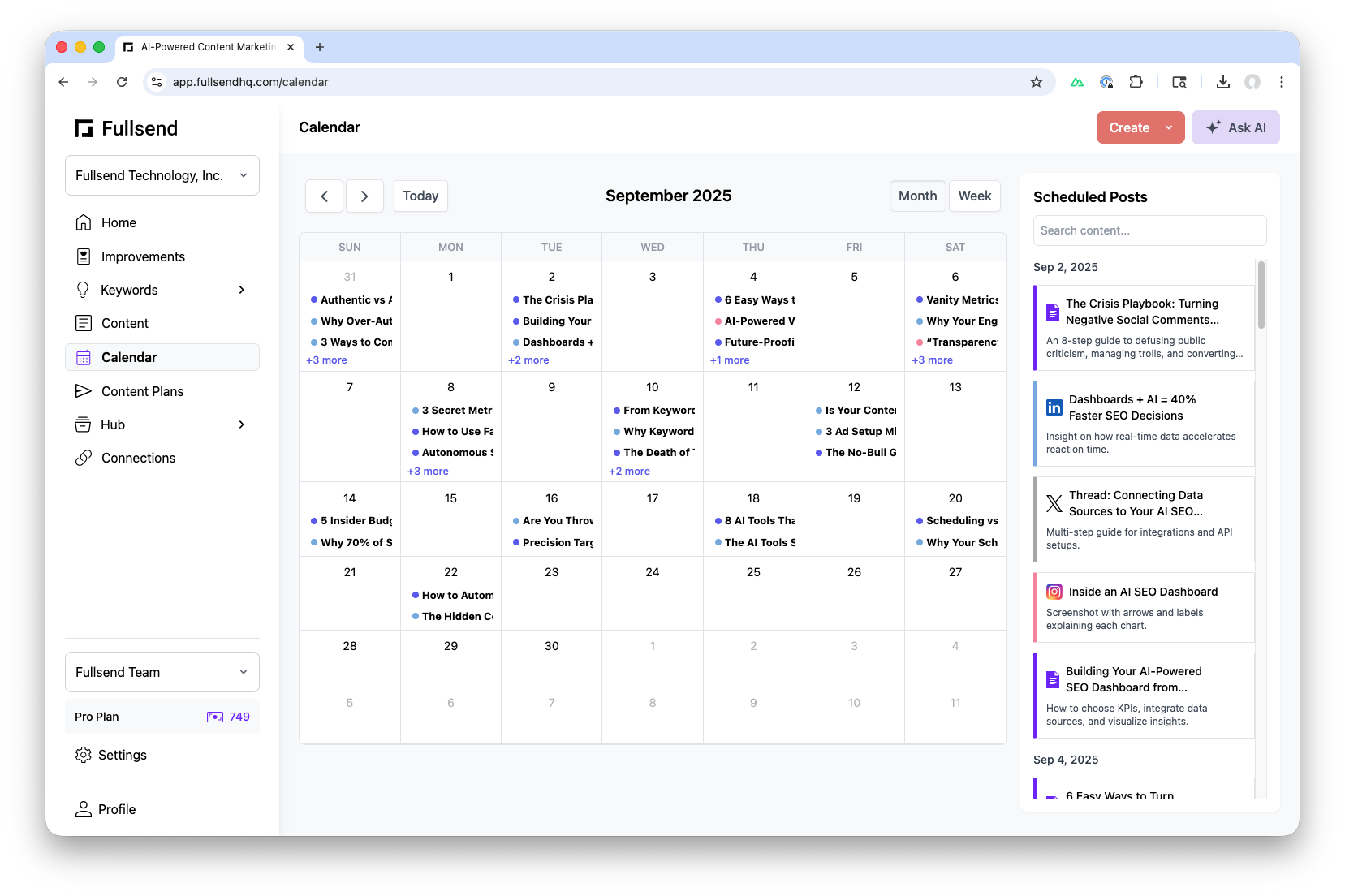

- Content Generation. When the user selects a topic, agentic workflows generate drafts that are automatically critiqued and revised before the user ever sees them. Drafts can be blog posts or social media posts.

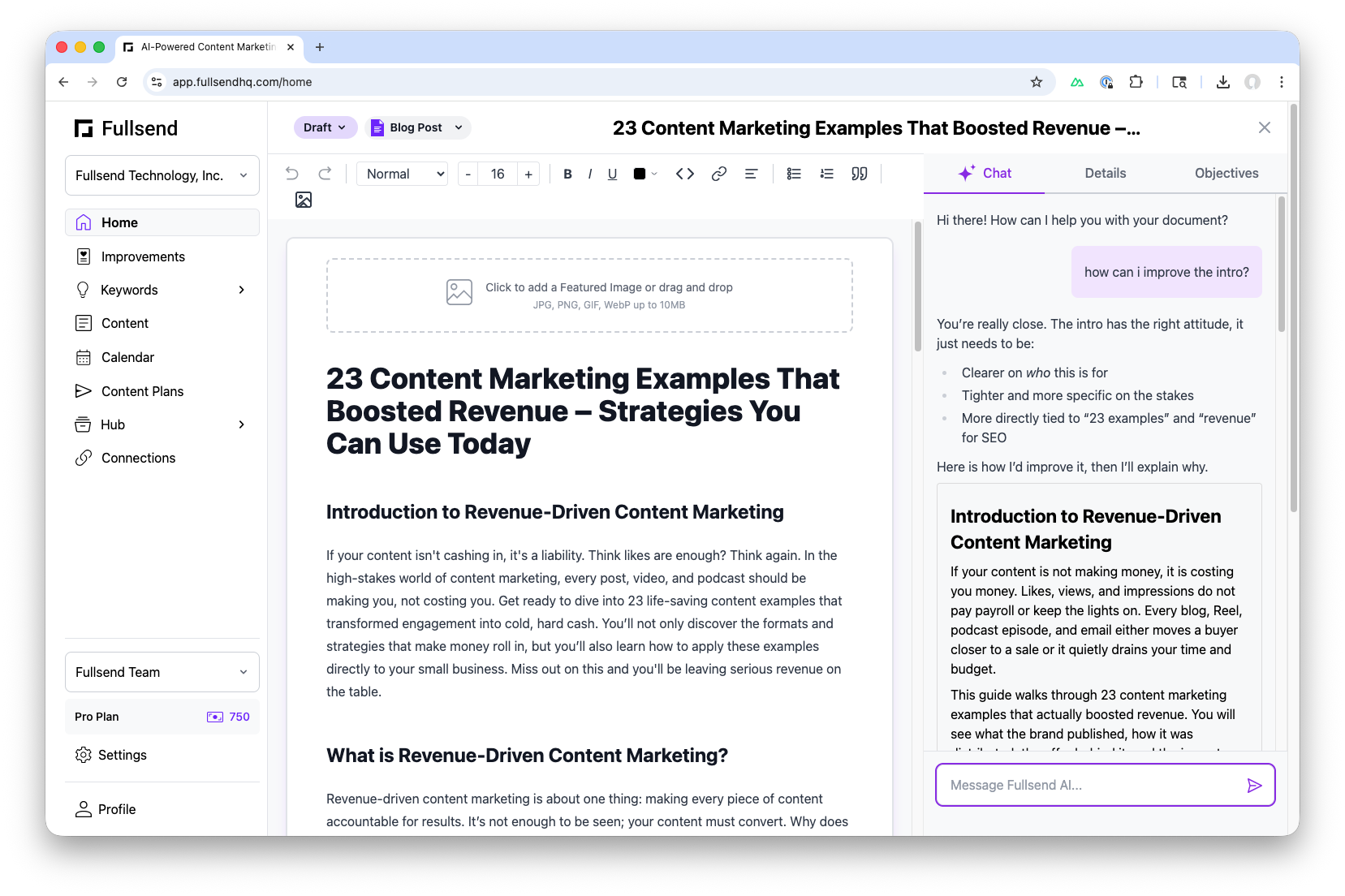

- Editing. A built-in content editor lets users refine the output, with an AI assistant available to help make edits in real time.

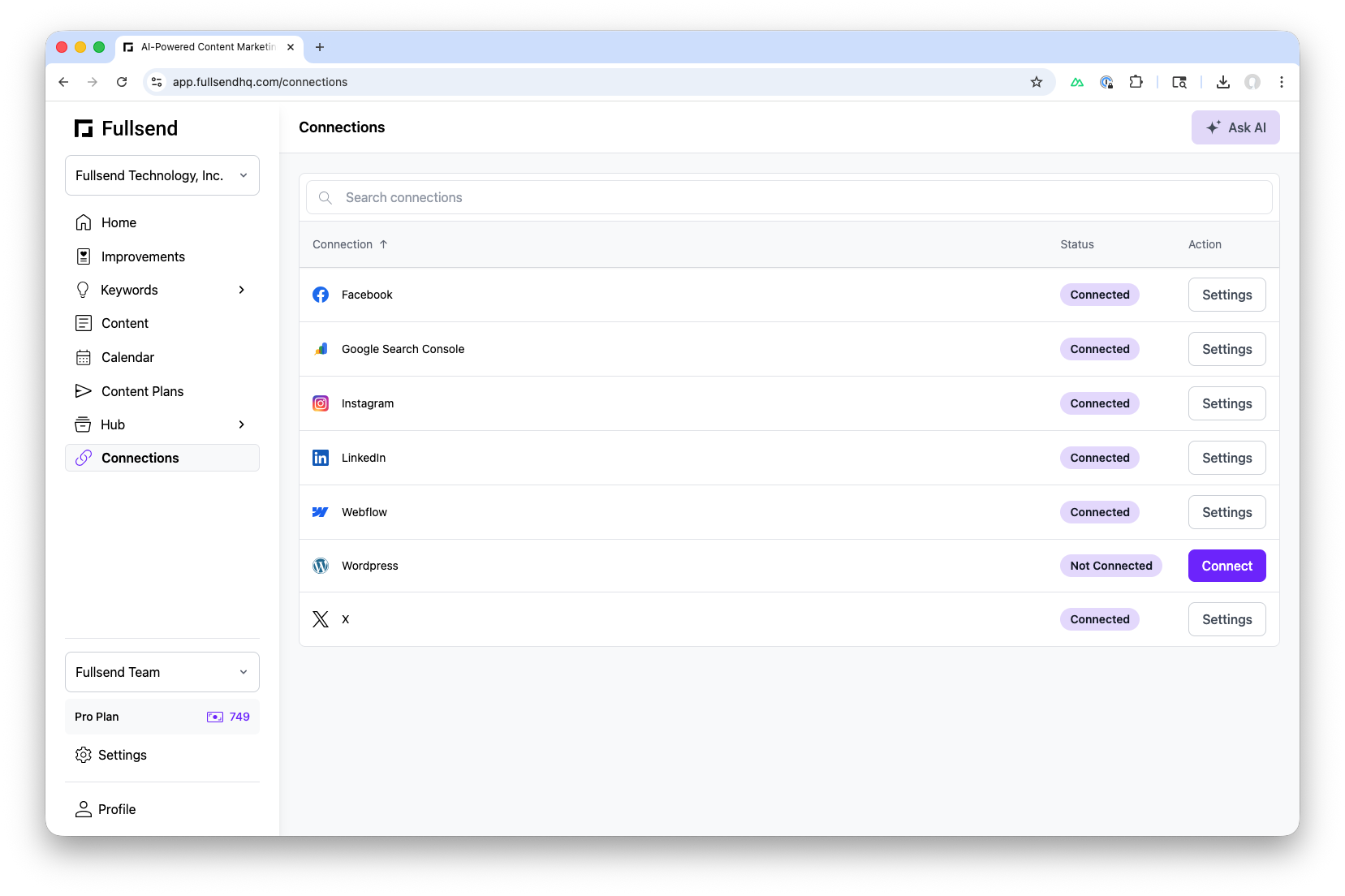

- Publishing. Content can be published directly to the user's website or social media channels from within Fullsend.

Technical Decisions

I started with CrewAI but switched to OpenAI's Agents SDK early on. The decision came down to a few things:

- Speed of evolution. OpenAI was shipping new capabilities faster than CrewAI could adopt them. I wanted access to web search, file search, RAG, reasoning models, and structured output as soon as they launched.

- Latency. CrewAI was formatting JSON output via prompt engineering and parsing the LLM's text output, rather than using built-in structured output. This introduced a noticeable latency penalty.

- MCP integrations. I needed tight integration between Fullsend's agents and external data sources, and MCP servers gave me a clean way to do that.
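
To make the latency point concrete, here is a minimal sketch of the difference between parsing JSON out of a free-text reply and receiving schema-conforming JSON directly. The `ContentSuggestion` schema, the function names, and the sample replies are all hypothetical; this is not Fullsend's or CrewAI's actual code. One plausible source of the extra latency (an assumption on my part) is that a failed parse usually means re-prompting the model, which costs another round trip.

```python
import json
import re
from dataclasses import dataclass


@dataclass
class ContentSuggestion:
    """Hypothetical schema for one content suggestion."""
    topic: str
    keyword: str


def parse_prompted_json(raw_text: str) -> ContentSuggestion:
    """Prompt-and-parse approach: dig the JSON object out of free-form text.

    Raises ValueError when nothing usable is found; a caller would then
    typically re-prompt the model (assumed here, not shown).
    """
    match = re.search(r"\{.*\}", raw_text, re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in model output")
    try:
        data = json.loads(match.group(0))
        return ContentSuggestion(topic=data["topic"], keyword=data["keyword"])
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        raise ValueError(f"malformed model output: {exc}") from exc


def parse_structured(raw_json: str) -> ContentSuggestion:
    """Structured-output approach: the reply is already schema-conforming JSON."""
    return ContentSuggestion(**json.loads(raw_json))


# A chatty reply still parses, but only after the extraction step.
chatty_reply = (
    "Sure! Here is the JSON:\n"
    '{"topic": "Spring lawn care", "keyword": "lawn aeration"}\n'
    "Let me know if you need anything else."
)
suggestion = parse_prompted_json(chatty_reply)
```

With built-in structured output, the extraction step and the failure path above largely disappear, because the model's reply is constrained to the schema up front.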

One of the most interesting challenges wasn't purely technical. It was figuring out when to use workflows versus agents. Workflows are great for repeatable, structured automation (like the generate-critique-revise loop). Agents are better when a task requires reasoning and decision-making (like analyzing audit results and recommending content strategy). Getting that boundary right shaped the entire architecture.
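
The generate-critique-revise loop is a good example of why a workflow fits: the order of steps is fixed, so the control flow can live in ordinary code while the model only fills in each step. The sketch below assumes hypothetical step functions (`fake_generate`, `fake_critique`, `fake_revise`) standing in for model calls, and a `max_rounds` cap that I have added for illustration; it is not the production implementation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Critique:
    approved: bool
    feedback: str


def generate_critique_revise(
    topic: str,
    generate: Callable[[str], str],
    critique: Callable[[str], Critique],
    revise: Callable[[str, str], str],
    max_rounds: int = 3,
) -> str:
    """Fixed-order workflow: code decides what runs next, not the model."""
    draft = generate(topic)
    for _ in range(max_rounds):
        review = critique(draft)
        if review.approved:
            break
        draft = revise(draft, review.feedback)
    return draft


# Stand-in steps so the sketch runs without a model; real steps would call an LLM.
def fake_generate(topic: str) -> str:
    return f"Draft about {topic}"


def fake_critique(draft: str) -> Critique:
    if "call to action" in draft:
        return Critique(approved=True, feedback="")
    return Critique(approved=False, feedback="add a call to action")


def fake_revise(draft: str, feedback: str) -> str:
    return f"{draft} (revised: {feedback})"


final = generate_critique_revise("local SEO", fake_generate, fake_critique, fake_revise)
print(final)  # prints "Draft about local SEO (revised: add a call to action)"
```

An agent would differ in that the model itself chooses the next step or tool based on what it has seen so far, which is the better fit for open-ended tasks like interpreting audit results.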

On the UX side, we learned that users need both a conversational interface and structured artifacts. Chat alone isn't enough. People want to iterate on tangible outputs like content plans and draft posts. We built an experience where the chat and the editor work together, similar to how coding agents produce code you can then refine.